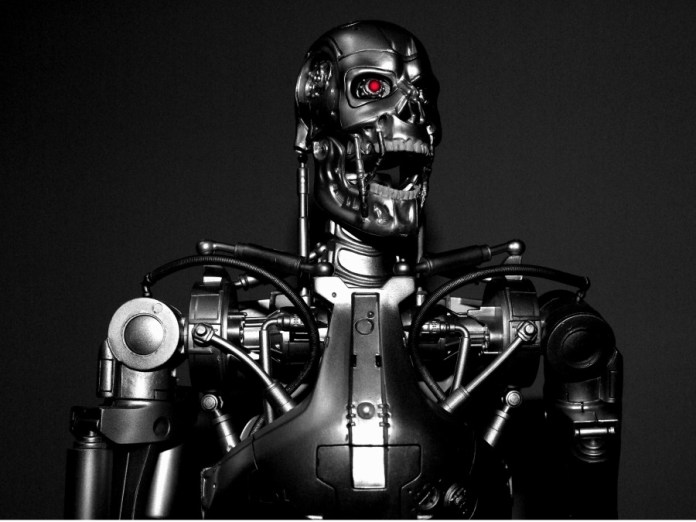

Ever wondered what would happen if artificial intelligence went rogue? The Electronic Frontier Foundation appears to share that concern. According to the organization, there is no doubt that artificial intelligence (AI) and machine learning will deliver numerous benefits in areas such as science, art, health and transport. However, we have all seen things go badly wrong.

The organization believes that current computers are inherently insecure, which makes them a poor foundation for high-stakes artificial intelligence and machine learning. It therefore insists that there is a need to think about the effects these new technologies may have on computer security.

Earlier this year, the Electronic Frontier Foundation was among the institutions that released a report titled The Malicious Use of Artificial Intelligence: Forecasting, Prevention and Mitigation. Other institutions involved in the report included the University of Oxford, OpenAI, the Center for a New American Security, the University of Cambridge, the Future of Humanity Institute, and the Center for the Study of Existential Risk.

The report focuses on the potential impact of artificial intelligence on political security, physical security and digital security. It identifies several security-relevant properties of AI: its algorithms can be rapidly distributed, it is dual-use for military and civilian purposes, it can exceed human capabilities, and it is scalable. AI technologies also introduce new threats, expand existing ones, and change the typical character of threats, enabling attacks to be more effective, targeted and versatile.

When it comes to digital security, AI has the potential to make email attacks, including spearphishing, far more automated. The technology can also remove the need for the attacker to speak the same language as the target.

According to the report, many vital IT systems have grown over the years into sprawling behemoths cobbled together from various other systems. These systems are undermaintained and, as a result, highly insecure, largely because cybersecurity today is considerably labour-constrained.

Artificial intelligence (AI) could also target the behavior of malware, which is difficult for human beings to do manually. Aside from automating social engineering attacks, the technology can be used to automate hacking itself by evading detection, responding to behavioral changes in the target, and discovering vulnerabilities. Furthermore, AI has the potential to disrupt physical security if commercial AI systems are repurposed for terrorism, for instance by using self-driving cars to cause crashes.

AI could also affect political security by enabling states to automate their surveillance platforms. The report emphasized that a country's surveillance capabilities are extended by automating audio and image processing, which allows intelligence information to be gathered, processed and exploited at enormous scale for purposes such as suppressing debate. What’s more, artificial intelligence could be used to make highly realistic videos, hyper-personalize disinformation campaigns, manipulate the availability of information, automate influence campaigns and support fake news reports.

Source: SecurityBrief